October was WILD for AI releases. If you blinked, you missed at least 10 game-changing updates to the vibe coding tools that power modern product development.

Five of the biggest AI companies, Cursor, Anthropic, Google, OpenAI, and Figma, didn't hold back last month. Each release signals a fundamental shift in how we think about building software.

These weren't just incremental improvements. The updates reveal where the entire industry is heading: away from traditional coding, toward AI-orchestrated development workflows.

Here's the complete breakdown of what dropped, why it matters, and how it affects your workflow as a product builder.

Key Takeaways

- Cursor 2.0 shifts the IDE paradigm to an agent-first interface, making developers AI orchestrators rather than code writers

- Major AI companies (Cursor, Anthropic, Google, OpenAI, Figma) are moving away from general-purpose chat toward specialized builder-focused tools

- Claude Skills and custom MCPs enable professional-grade AI assistance by encoding domain expertise that generic LLMs lack

- The Product Designer/Engineer/Manager roles are converging into unified "product builders" who execute across all domains using AI tools

- Education investment is now critical as companies like Cursor launch dedicated learning platforms, signaling that vibe coding requires systematic skill development

Learn this hands-on

Ready to ship a real production app, not just pick a model? Check out the Master Course: Build and Ship a Production-Ready App with Lovable and Cursor.

Cursor: The Shift from Code Editor to AI Orchestrator

Cursor delivered two massive updates that fundamentally change what an IDE looks like in the AI era.

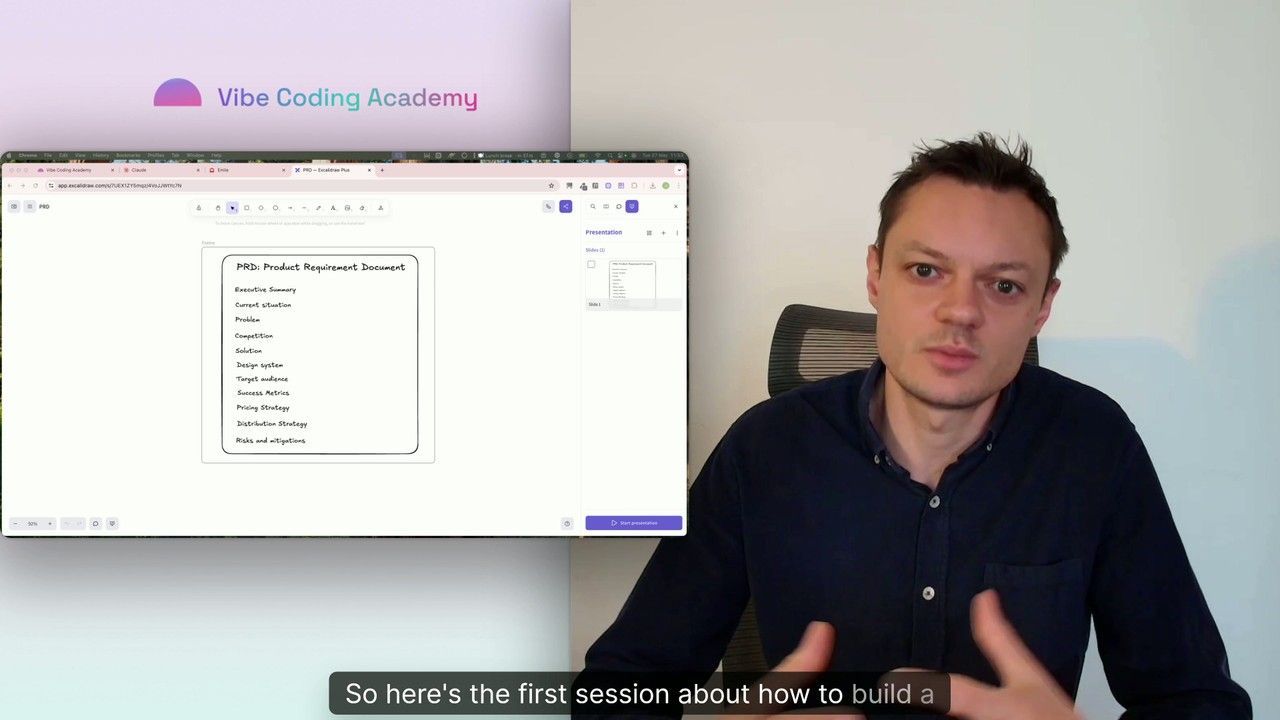

Cursor Learn: Institutionalizing AI-Assisted Development Education

First, Cursor Learn launched with Lee Robinson (ex-Vercel VP) as their VP of Developer Education. This isn't just a blog or documentation site; it's a dedicated education platform designed to help developers master AI-assisted development.

The significance? Cursor is acknowledging that vibe coding requires different skills than traditional development. They're investing in systematic education because the transition from writing code to orchestrating AI agents isn't intuitive.

If a major IDE is building an entire education division around this paradigm shift, that tells you something about the permanence of this change.

Cursor 2.0: Agents First, Files Second

The Cursor 2.0 UI overhaul is even more telling. The interface now centers on multi-agent workflows instead of files.

Similar to Warp's terminal redesign, most of the Cursor window is now devoted to the agent interface. Your file tree and code editor become secondary to your conversation with AI agents.

This shift reveals the new paradigm explicitly: developers are becoming AI babysitters rather than code writers.

Your primary job is no longer writing functions. It is:

- Articulating what you want the AI to build

- Reviewing AI-generated code for correctness

- Orchestrating multiple AI agents toward a coherent outcome

- Making architectural decisions the AI can't make alone

The interface change makes this workflow explicit. You're not opening files to write code. You're directing agents that write code for you.

Composer: Cursor's Speed-Optimized Model

Cursor also launched Composer, their proprietary model built specifically for speed. Think of it as a direct competitor to Anthropic's Claude Haiku 4.5.

The value proposition is clear: handle simple, repetitive coding tasks efficiently to lower your AI bill without sacrificing quality on straightforward work.

The open question is whether Cursor can compete with companies whose core competency is model development. Building great developer tools is different from building great AI models. But the strategic move makes sense: controlling more of the stack means better optimization for their specific use case.

For product builders: Expect faster responses on routine tasks like refactoring, bug fixes, and boilerplate generation. Save the powerful (expensive) models for complex architectural decisions.
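
One way to picture this split is a small router that sends routine work to a fast model and everything else to a more capable one. The sketch below is purely illustrative: the model labels, the task categories, and the `pick_model` helper are hypothetical names chosen for this example, not Cursor identifiers or part of any Cursor API.

```python
from dataclasses import dataclass

# Hypothetical labels for illustration; not real model identifiers.
FAST_MODEL = "fast-model"
STRONG_MODEL = "strong-model"

# The routine task kinds named above: refactoring, bug fixes, boilerplate.
ROUTINE_KINDS = {"refactor", "bug_fix", "boilerplate"}


@dataclass(frozen=True)
class Task:
    kind: str
    description: str


def pick_model(task: Task) -> str:
    """Return the fast model for routine work and the strong model otherwise."""
    return FAST_MODEL if task.kind in ROUTINE_KINDS else STRONG_MODEL


if __name__ == "__main__":
    for task in (
        Task("boilerplate", "Generate a CRUD handler"),
        Task("architecture", "Choose a sync strategy for offline mode"),
    ):
        print(task.kind, "->", pick_model(task))
```

In practice you make this choice by hand in the model picker, but the rule of thumb is the same: default to the cheap model and escalate only when the task involves real design judgment.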

Claude Skills: Your Expertise, Automatically Accessible

Beyond the VS Code extension, /rewind command, and Haiku 4.5, Anthropic launched Claude Skills, one of the most strategically important updates for serious product builders.

Claude Skills lets you extend Claude's capabilities by sharing specific expertise it can reference when needed. Think design systems, coding standards, architectural patterns, or domain-specific knowledge.

The implementation is elegant: you define a skill once, and Claude autonomously decides when to leverage it based on context. You don't manually invoke skills; Claude recognizes when they're relevant.
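
To make the "define once, applied when relevant" idea concrete, here is a toy sketch. It is not how Claude selects skills; Anthropic's actual mechanism is not described in this post. The `Skill` class, its `keywords` field, and `relevant_skills` are hypothetical, and simple keyword overlap stands in for whatever contextual judgment the model really applies.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Skill:
    """A hypothetical skill: a name plus keywords hinting at when it applies."""
    name: str
    keywords: frozenset[str]


def relevant_skills(request: str, skills: list[Skill]) -> list[str]:
    """Return names of skills whose keywords appear in the request.

    Keyword overlap is an assumption made for illustration only.
    """
    words = set(request.lower().split())
    return [skill.name for skill in skills if skill.keywords & words]


if __name__ == "__main__":
    library = [
        Skill("design-system", frozenset({"button", "tokens", "component"})),
        Skill("sql-style", frozenset({"query", "migration"})),
    ]
    print(relevant_skills("Restyle the login button", library))
```

The point of the sketch is the division of labor: you describe each skill once, and the selection step runs on every request without you having to name the skill.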

Why this matters for vibe coding tools: This is the productized version of what advanced users have been doing manually with custom instructions and context files. It's Anthropic acknowledging that generic AI assistance isn't enough for professional work.

Professional builders have specific standards, proven patterns, and accumulated wisdom that generic LLMs lack. Skills make that expertise portable and automatically applicable. To master the full range of Claude Code capabilities, including advanced skills usage, check out our dedicated Claude Code series.

The limitation: You can't directly call skills. Claude decides when they're relevant. This autonomous approach is double-edged: you get less control, but also less cognitive overhead from remembering when to apply which expertise.

Combined with custom MCPs for encoding deeper expertise, Claude Skills represents the maturation of AI assistance from general-purpose chat to specialized professional tooling.

Google AI Studio: From Chat Toy to Builder Platform

Google made a strategic repositioning with their complete Google AI Studio UX overhaul, and it's telling.

New Models: Veo 3.1 and Imagen 4.0

First, the model updates:

- Veo 3.1 for video generation

- Imagen 4.0 for image generation (reportedly better than competitors)

But the real story is the interface redesign, which reveals Google's strategic bet.

The Builder-First Interface Redesign

Google AI Studio's new UX has two key focuses:

1. Explicit model selection before chatting: You now choose whether you're generating images, video, or text before starting your session. This prevents the "multipurpose chat" experience that encourages exploration over execution.

2. Heavy emphasis on building vs. chatting: They're positioning themselves closer to Cursor than ChatGPT, pushing users to ship actual prototypes instead of just experimenting with AI for fun.

This is a fascinating strategic move. While ChatGPT optimizes for engagement through open-ended conversation, Google is optimizing for retention through building habits.

The bet: users who build something they can deploy will return more consistently than users who just chat for entertainment.

For product builders: Google AI Studio is becoming a legitimate option in your vibe coding tools stack, especially if you need integrated image/video generation alongside code.

OpenAI: AgentKit and the No-Code AI Pipeline Play

OpenAI launched AgentKit, which bundles two distinct capabilities targeting different use cases.

Agent Builder: The n8n Competitor

Agent Builder is OpenAI's take on visual AI workflow creation. Think of it as an AI-focused n8n competitor.

The target users aren't developers; they're product builders who want to orchestrate AI agents without writing code. You string together API calls, transformations, and AI model interactions through a visual interface.
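
Under the hood, a visual workflow like this amounts to a chain of steps where each node's output feeds the next. The sketch below shows that idea in a few lines; it is a minimal illustration, not AgentKit's API. `run_workflow`, `normalize`, and `stub_model` are hypothetical names, and `stub_model` is a placeholder where a real node would call a model.

```python
from typing import Callable

# Each node takes the previous node's output and returns its own.
Step = Callable[[str], str]


def run_workflow(steps: list[Step], payload: str) -> str:
    """Run the steps in order, passing each result to the next step."""
    for step in steps:
        payload = step(payload)
    return payload


def normalize(text: str) -> str:
    """Transformation node: collapse runs of whitespace."""
    return " ".join(text.split())


def stub_model(text: str) -> str:
    """Placeholder for an AI node; a real one would call a model API here."""
    return f"summary({text})"


if __name__ == "__main__":
    result = run_workflow([normalize, stub_model, str.upper], "  hello   world ")
    print(result)
```

A visual builder lets you draw this chain instead of typing it, which is what makes it accessible to people who don't write code.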

This positions OpenAI directly against workflow automation platforms while leveraging their model advantage. If the workflows are primarily AI-driven, why not build them in the platform that controls the most powerful models?

ChatKit: Embedded AI Features Made Easy

ChatKit provides pre-built tools for adding chat features to your application. Instead of building custom interfaces for GPT integration, you get standardized components that work out of the box.

The strategic play is obvious: make it so easy to add OpenAI-powered features to your app that you don't consider alternatives.

Sora 2: OpenAI's Video Generation Entry

OpenAI also launched Sora 2, their latest video generation model, entering direct competition with Google's Veo 3.1 and other video generation players.

Bottom line for product builders: AgentKit lowers the barrier to AI integration significantly. If you've been postponing adding AI features because the implementation complexity seemed high, these tools remove that excuse.

Figma Weave: Keeping Designers in the Ecosystem

Figma made a major play to prevent a designer exodus with Figma Weave, following their acquisition of Weavy.

What Is Figma Weave?

Figma Weave is a node-based system for generating images and video, essentially "n8n for visual content." You can string together prompts, transformations, and style applications through a visual workflow interface.

The strategic intent is transparent: keep designers inside the Figma ecosystem instead of jumping to Midjourney, Runway, or other specialized AI generation tools. For a hands-on introduction to this design-to-code bridge, watch our lesson on the Figma MCP Server and its workflows.

If you can generate, iterate, and refine AI-generated visual assets without leaving Figma, why would you maintain subscriptions to multiple separate tools?

Dylan Field's Product Builder Thesis

Figma CEO Dylan Field articulated something that's been increasingly evident: "Everyone's a product builder now."

He's observing the Product Designer, Product Engineer, and Product Manager roles merging into one unified product builder role.

This isn't hypothetical future-thinking. It's happening now. The traditional boundaries between these disciplines are dissolving because AI tools enable individuals to execute across all three domains.

You no longer need a designer to create high-fidelity mockups, an engineer to implement them, and a PM to coordinate between them. One product builder with the right vibe coding tools can move from concept to deployed feature.

This is exactly why learning vibe coding matters regardless of your technical background. The role boundaries are disappearing.

Everyone's a product builder now.

The Emerging Pattern Across All Updates

Look across all five companies' October releases, and a clear pattern emerges:

1. Building beats chatting: Cursor, Google, and OpenAI are all emphasizing production outputs over exploratory conversation.

2. Specialization over general-purpose: Claude Skills, Figma Weave, and Cursor Composer all acknowledge that generic AI assistance isn't enough for professional work.

3. Ecosystem lock-in through integration: Every company is making it easier to stay within their platform than to integrate multiple tools.

4. Education as strategic investment: Cursor Learn signals that mastering these tools requires systematic learning, not just intuitive exploration.

5. Role convergence acceleration: The Product Designer/Engineer/Manager distinction is collapsing faster than expected.

What This Means for Your Vibe Coding Tools Stack

Based on these October updates, here's how to think about your current stack:

For prototyping and UI work: Figma Weave now keeps you in one ecosystem. Evaluate if leaving Figma for specialized tools still makes sense.

For AI-assisted coding: Cursor 2.0's agent-first interface is the future. If you're still thinking in terms of "files I edit," your mental model is outdated.

For encoding expertise: Claude Skills + custom MCPs represent the professional tier of AI assistance. Generic prompting is amateur hour.

For workflow automation: OpenAI's AgentKit and n8n are converging on similar territory. Choose based on whether you're AI-first or integration-first.

For staying current: Updates now arrive too fast to track manually. You need systematic education (like Cursor Learn or Vibe Coding Academy) to keep your skills relevant.

The Vibe Coding Tools Landscape Is Maturing Fast

Six months ago, we were figuring out basic prompting techniques. Now we're orchestrating multi-agent workflows, encoding professional expertise into AI-accessible formats, and shipping production applications in days.

The October updates aren't just incremental improvements. They represent the maturation of vibe coding tools from experimental novelties to professional development platforms.

The implications for product builders:

- Skill requirements are shifting from writing code to orchestrating AI agents

- Tool specialization is increasing even as individual capabilities broaden

- Role boundaries are dissolving between traditional product disciplines

- Education investment is becoming critical to keep pace with changes

- Distribution remains the bottleneck, not building capability

If you're still approaching these tools like enhanced autocomplete, you're missing the fundamental shift. These aren't coding assistants anymore; they're vibe coding tools that fundamentally change what building software means.

The companies shipping these updates understand this. That's why they're investing in education platforms, redesigning interfaces around agent workflows, and enabling expertise encoding rather than just better autocomplete.

The question isn't whether to adopt these tools. It's whether you're adopting the mindset shift they enable.

October's releases make one thing clear: the future of product building isn't writing better code. It's orchestrating AI agents more effectively while focusing on the problems that still require human judgment, like building distribution advantages that make your products actually succeed.

Which October update will change your workflow most? The answer depends on whether you're ready to think like a product builder instead of a traditional developer.

Stay ahead of the vibe coding tools evolution. Join Vibe Coding Academy to master the systematic approach to AI-powered product building.