The gap between Claude Code and Codex CLI is smaller than it has ever been. GPT 5.5 has significantly narrowed the coding quality gap with Opus 4.7, and OpenAI's Codex CLI has matured into a real alternative rather than a curiosity. If you are choosing your primary AI coding tool in 2026, you owe it to yourself to put both through their paces before committing.

We put Opus 4.7 and GPT 5.5 head-to-head inside their native CLIs across nine categories: usability, cost and token efficiency, planning, greenfield builds, init command, code review, context management, memory, and ecosystem. Here is what the data actually shows.

According to the 2025 Stack Overflow Developer Survey, 84% of developers are already using or planning to use AI tools in their workflow. The question is not whether to use a CLI agent. It is which one fits your workflow. If you want the broader picture across IDEs, prototyping tools, and agents, the complete AI coding tools comparison for 2026 covers the full landscape.

Key Takeaways

- GPT 5.5 significantly narrowed the coding quality gap with Opus 4.7, making Codex CLI a genuine alternative rather than a curiosity in 2026.

- Codex CLI uses roughly half the tokens for the same work (82k vs 173k), making it the clear winner on cost for daily workloads.

- Claude Code's planning depth and ecosystem (hooks, isolated worktrees, ultrathink) remain its strongest differentiators for complex architectural tasks.

- Claude Code 2.1.0 introduced usability regressions, including terminal glitches and permission prompts that stall long-running tasks, while Codex's Rust UI stays smooth.

- Codex ships with pre-installed agent browser and skill creator capabilities, plus YOLO mode for uninterrupted execution. Claude Code supports both capabilities but requires installing them separately.

- Sub-agent context philosophy differs fundamentally: Claude Code uses isolated worktrees for predictability, while Codex inherits full conversation history for better research continuity.

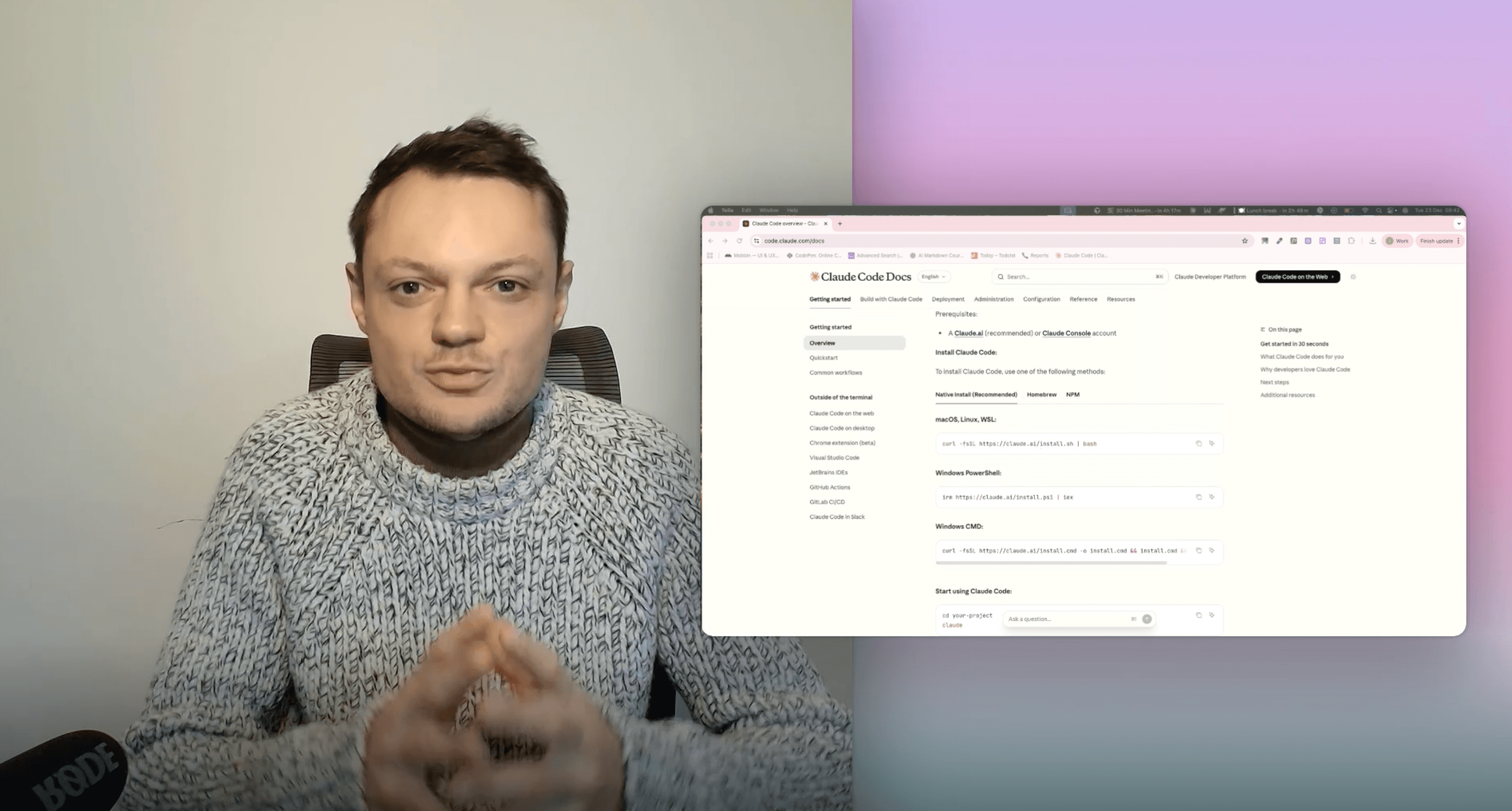


Quick Verdict

| Category | Winner |
|---|---|
| Usability | Codex CLI |
| Cost & Token Efficiency | Codex CLI |
| Planning Depth | Claude Code |
| Greenfield Build Quality | Tie (depends on priority) |
| Init Command | Codex CLI |
| Code Review Detail | Claude Code |
| Context Management | Codex CLI |
| Memory | Codex CLI (cross-project) |
| Ecosystem & Features | Claude Code |
| Sub-agents | Codex CLI (in practice) |

There is no single winner. Claude Code wins on ecosystem depth and planning quality. Codex wins on usability, cost, and day-to-day session performance. Read on for the full breakdown.

Usability: Where Claude Code Is Breaking Down

Usability is where Claude Code has been struggling since the 2.1.0 update. The terminal glitches, rendering breaks, and cache leak bugs affect the experience noticeably. What used to be one of the best terminal UIs now has rough edges in ways that compound across a long session.

The permission model shift made things worse. Anthropic replaced the "dangerously skip permissions" bypass mode with auto mode, but auto mode still prompts for permissions in ways the old bypass never did. In practice, if you start a long task and switch to another session, you might return to find the task stalled at a permission prompt, waiting for input that never came.

Codex CLI handles this better. Its YOLO mode runs without any permission interruptions. The CLI is built on Rust rather than Claude Code's React-based setup, so the UI is smoother and nothing breaks mid-session. Codex also supports direct personality configuration. If GPT 5.5's default sycophantic tone bothers you (it is noticeably more agreeable than Opus 4.7), you can dial it back with a setting. In Claude Code, you do that through CLAUDE.md instructions, which works but is less immediate.

Pre-installed skills are another gap. Codex ships with an agent browser skill built in, meaning it will automatically open a browser to verify UI changes after implementing a feature. It also ships with a skill creator that generates properly structured skills on demand. Claude Code has both capabilities but requires you to install them separately.

Where Claude Code still leads on usability: rewinding. Codex does not support reverting to an earlier state mid-session, and the ability to rewind is genuinely valuable when the model takes a wrong turn. Claude Code also lets you expand the model's thinking output with Ctrl+O, which Codex does not support cleanly. Seeing the reasoning mid-task lets you correct the approach before the implementation finishes.

Point: Codex CLI

Cost and Token Efficiency: A 2x Gap

This is where the numbers get stark. Running the same app build and debugging session on both tools, Opus 4.7 consumed 173,000 tokens while GPT 5.5 used 82,000. That is more than a 2x difference for the same amount of work.
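Here is a quick check of the arithmetic behind "more than a 2x difference", using only the two measured totals. Per-token prices differ between the two models and were not part of this test, so the sketch compares token counts only. The function names are illustrative.

```python
# Token totals reported for the same build-and-debug session.
OPUS_TOKENS = 173_000
GPT_TOKENS = 82_000


def usage_ratio(heavier: int, lighter: int) -> float:
    """How many times more tokens the heavier run used."""
    return heavier / lighter


def fraction_saved(heavier: int, lighter: int) -> float:
    """Share of the heavier run's tokens avoided by the lighter run."""
    return 1 - lighter / heavier


if __name__ == "__main__":
    print(f"Opus 4.7 used {usage_ratio(OPUS_TOKENS, GPT_TOKENS):.2f}x the tokens")  # 2.11x
    print(f"GPT 5.5 used {fraction_saved(OPUS_TOKENS, GPT_TOKENS):.1%} fewer tokens")  # 52.6%
```

Put another way, GPT 5.5 finished the same work with roughly half the token budget.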

The cost gap compounds with Claude Code's subscription model. Claude Code is not available on the free tier at all. The Pro plan hits its limits so fast on real workloads that it is effectively not usable for meaningful projects. The Max plan is the practical minimum, and even that runs dry faster than expected on heavy usage.

Codex is available on the free plan with limited usage. When you do exhaust the limits, you have stretched them further per task than you would with Claude Code.

Point: Codex CLI

Planning: Depth vs Speed

Planning is a clear win for Claude Code. Both tools got the same task: build a frontend for an existing FastAPI backend. Codex produced a plan that was clean and organized but thin. It identified the main flow and the key pages, and it explicitly listed its assumptions, which is useful. However, it did not probe the visual design direction, and that matters for frontend work.

Opus 4.7's plan was significantly more in depth. It covered more of the application's edge cases, considered the component architecture, and proactively pulled in shadcn/ui to improve the user experience. It also took longer: the same task that Codex planned and executed in 8 minutes took Claude Code 24 minutes. Whether that trade-off is worth it depends entirely on what you are building. For a deeper look at this planning workflow, the complete guide to Claude Code plan mode explains how to structure tasks so the planning phase pays off instead of just adding time.

Point: Claude Code

Greenfield Build: Two Different Philosophies

On a greenfield monorepo with a Python Flask backend and Next.js frontend, the two tools revealed their underlying philosophies clearly.

Codex started implementing immediately without entering planning mode. It finished faster, focused on making sure the app functioned correctly, and implemented a fallback mechanism when it realized the API key was not available. The fallback used local responses rather than crashing. That kind of defensive engineering instinct is genuinely useful in production contexts.

Claude Code switched into planning mode automatically and built the app with stronger UI polish. The result looked more like something you would actually ship. But it assumed the API key would be present and did not build any fallback. When the key was missing, the app gave an error instead of degrading gracefully. When debugging was needed, Claude Code also relied more on asking the user to run tests and report back, while Codex's built-in agent browser debugged on its own.
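The fallback Codex built follows a simple defensive pattern. Check for the key, and if it is absent, serve a local response instead of raising. This is a minimal sketch of that pattern, not Codex's actual output. The environment variable name `LLM_API_KEY`, the `call_remote_model` stub, and the fallback wording are all hypothetical.

```python
import os
from typing import Mapping, Optional

# Hypothetical variable name; the article does not say which key the test app used.
API_KEY_ENV = "LLM_API_KEY"

# Local response served when no key is configured (hypothetical wording).
LOCAL_FALLBACK_REPLY = "Running in offline mode: this is a local placeholder response."


def call_remote_model(prompt: str, api_key: str) -> str:
    """Placeholder for the real API client call (hypothetical)."""
    raise NotImplementedError("wire up the real model client here")


def get_reply(prompt: str, env: Optional[Mapping[str, str]] = None) -> str:
    """Return a model reply, degrading to a local response when no API key is set."""
    env = os.environ if env is None else env
    api_key = env.get(API_KEY_ENV)
    if not api_key:
        # Defensive path: degrade gracefully instead of failing the request.
        return LOCAL_FALLBACK_REPLY
    return call_remote_model(prompt, api_key)
```

Passing `env` explicitly keeps the function testable. In a Flask route you would simply call `get_reply(prompt)` and let it read the real environment.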

GPT 5.5 feels like a backend engineer: gets the functionality working first, handles edge cases, asks fewer questions. Opus 4.7 feels like a full-stack engineer: builds the UI and backend together, produces more polished output, but requires more available information upfront.

Point: Tie (prioritize based on what you are building)

Init Command: Less Is More

Claude Code's init generates a CLAUDE.md around 90 lines covering architecture, app flow, frontend and backend structure, and run commands. A lot of that information is redundant for the agent and adds context bloat without adding value.

Codex's init output was more focused: commit guidelines, pull request guidelines, security instructions, and a brief project structure section. The goal of an init file is to orient the agent efficiently, not to document everything. Codex's approach is closer to right.

Point: Codex CLI

Code Review: Detail vs Focus

For a reliability code review of the same codebase, Claude Code produced a more detailed report: findings organized by priority, component-level breakdown, exact code snippets tied to each issue. It also surfaced security problems like a leaked API key and a vulnerability.

Codex's report cited line numbers but not the actual code snippets. Both reviews were thorough and each caught things the other missed. The key difference is scope discipline. The task was a reliability review. Claude Code included everything it noticed, including security issues outside the scope. Codex stayed focused on what was asked.

Whether broader is better depends on your use case. For a quick targeted review, Codex's discipline is a feature. For a comprehensive audit where you want the agent to surface anything relevant, Claude Code's tendency to over-report is actually useful.

Point: Split (Claude Code for completeness, Codex for precision)

Context Management and Memory

On compaction, Codex performed better in testing. Claude Code does multi-step context editing that removes old tool calls and reasoning steps. Codex compacts the full conversation as-is, but preserves the last 20,000 tokens without compacting them at all. That preserved tail means Codex comes out of compaction with more usable recent context, and performance degrades less noticeably after a compaction event.
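Below is a minimal sketch of the tail-preserving strategy described above. It assumes a message list with precomputed token counts and a hypothetical `summarize` callback. It is not Codex's implementation. It only shows why the most recent ~20,000 tokens survive verbatim while older turns collapse into a summary.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Message:
    role: str
    text: str
    tokens: int  # token count, assumed precomputed by a tokenizer


def compact(
    history: List[Message],
    summarize: Callable[[List[Message]], Message],
    keep_tail_tokens: int = 20_000,
) -> List[Message]:
    """Summarize older messages, keeping the newest ones verbatim within a token budget."""
    tail: List[Message] = []
    budget = keep_tail_tokens
    # Walk backwards from the newest message, keeping whole messages while they fit.
    for msg in reversed(history):
        if msg.tokens > budget:
            break
        tail.append(msg)
        budget -= msg.tokens
    tail.reverse()
    head = history[: len(history) - len(tail)]
    if not head:
        return list(history)  # everything fits in the tail; nothing to compact
    # Older turns collapse into one summary message placed before the verbatim tail.
    return [summarize(head)] + tail
```

This sketch keeps whole messages, so the preserved tail can come in slightly under the 20,000-token budget. A real implementation might truncate the boundary message instead.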

On memory, the two take opposite approaches. Claude Code's memory is project-scoped: what it learns within a project stays in that project, and when you switch projects you start fresh. Codex builds cross-session, cross-project memory, so patterns it observes in one project can inform its behavior in another. For developers who want consistent behavior across diverse codebases, Codex's global memory model is more useful.
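The difference in scope is easiest to see as a lookup rule. In this toy sketch, project-scoped memory returns only the notes recorded under the current project, while global memory returns notes from every project. The class and method names are hypothetical and do not reflect either tool's storage format.

```python
from collections import defaultdict
from typing import DefaultDict, List


class MemoryStore:
    """Toy store contrasting project-scoped and global recall (hypothetical design)."""

    def __init__(self, scope: str) -> None:
        if scope not in ("project", "global"):
            raise ValueError("scope must be 'project' or 'global'")
        self.scope = scope
        self._notes: DefaultDict[str, List[str]] = defaultdict(list)

    def remember(self, project: str, note: str) -> None:
        """Record something learned while working in `project`."""
        self._notes[project].append(note)

    def recall(self, project: str) -> List[str]:
        """Return the notes available when starting work in `project`."""
        if self.scope == "project":
            # Project-scoped: a new project starts with nothing.
            return list(self._notes.get(project, []))
        # Global: notes learned anywhere carry over to every project.
        return [note for notes in self._notes.values() for note in notes]
```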

Point: Codex CLI (both categories)

Ecosystem: Claude Code's Deep Advantage

Claude Code has been in active development longer, and it shows. The hook system lets you run your own scripts at specific lifecycle points, such as before or after a tool call. You can use hooks to block unsafe commands or to run formatters automatically. Sub-agents run in dedicated worktrees, so their work does not affect the main session. You can also control the reasoning effort level and invoke ultrathink to push the model to its maximum depth on a specific task.
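As an illustration of the "block unsafe commands" use case, here is a minimal PreToolUse hook script. It assumes the behavior described in Claude Code's hooks documentation at the time of writing. The hook receives the pending tool call as JSON on stdin, with `tool_name` and `tool_input` fields. Exiting with code 2 blocks the call and feeds stderr back to the model. Verify both assumptions against the current docs. The blocked patterns are illustrative only.

```python
import json
import re
import sys
from typing import Tuple

# Illustrative patterns only; adjust for your own environment.
BLOCKED_PATTERNS = [
    r"\brm\s+-rf\s+/(\s|$)",          # rm -rf on the filesystem root
    r"\bgit\s+push\s+--force(\s|$)",  # force push (still allows --force-with-lease)
]


def should_block(command: str) -> bool:
    """Return True if the shell command matches any blocked pattern."""
    return any(re.search(pattern, command) for pattern in BLOCKED_PATTERNS)


def handle_event(raw: str) -> Tuple[int, str]:
    """Map a PreToolUse event (JSON text) to an (exit_code, stderr_message) pair."""
    event = json.loads(raw)
    if event.get("tool_name") != "Bash":
        return 0, ""  # only inspect shell commands
    command = event.get("tool_input", {}).get("command", "")
    if should_block(command):
        # Assumed convention: exit code 2 blocks the call and stderr goes back to the model.
        return 2, f"Blocked by hook: {command!r}"
    return 0, ""


if __name__ == "__main__":
    raw = "" if sys.stdin is None or sys.stdin.isatty() else sys.stdin.read()
    if raw.strip():  # only act when an event was piped in
        code, message = handle_event(raw)
        if message:
            print(message, file=sys.stderr)
        sys.exit(code)
```

Per the hooks documentation, you would register a script like this under the `PreToolUse` event with a `Bash` matcher in `.claude/settings.json`. Check the current schema before relying on it.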

The surface area is also wider. Sessions can run through the Claude desktop app and be delegated from the mobile app, and browser extensions add more touchpoints. Beyond the CLI, Codex mainly offers a web app and a recently released desktop app that was still rough at the time of testing.

Codex has two genuinely distinctive features that Claude Code lacks. The first is the attempt flag, which runs the same task N times in parallel and selects the best implementation. Claude Code can approximate this through configuration, but not as a first-class flag. The second is image generation: Codex can use OpenAI's image models directly from the CLI to generate images for the websites it builds. Claude Code has no image model and falls back to SVG-based graphics, which do not compete on quality.
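The attempt flag's behavior (run one task several times in parallel, keep the best result) is a best-of-N pattern. The sketch below shows the idea. The `run_attempt` and `score` callables are hypothetical stand-ins for an agent run and for whatever judge picks the winner. The sketch does not reflect how Codex actually scores attempts.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, TypeVar

T = TypeVar("T")


def best_of_n(
    run_attempt: Callable[[int], T],
    score: Callable[[T], float],
    n: int = 4,
) -> T:
    """Run n independent attempts concurrently and return the highest-scoring result."""
    if n < 1:
        raise ValueError("n must be at least 1")
    # Each attempt receives its index, e.g. to vary a seed or a working directory.
    with ThreadPoolExecutor(max_workers=n) as pool:
        results = list(pool.map(run_attempt, range(n)))
    return max(results, key=score)
```

In practice `score` might count passing tests. With `n=1`, the function reduces to a single ordinary run.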

Point: Claude Code (ecosystem breadth), with a genuine edge to Codex on image generation

Sub-agents: Context Isolation vs Continuity

Both tools support sub-agents, but they approach context differently in ways that matter in practice.

Claude Code gives each sub-agent a completely fresh context window. The sub-agent sees only its prompt, the system prompt, and any global rules. No conversation history. This design is intentional, built around context isolation for predictability. As Thariq Shihipar, Member of Technical Staff at Anthropic, put it: "subagents use their own isolated context windows, and only send relevant information back to the orchestrator, rather than their full context."

Codex takes the opposite approach: sub-agents inherit the full conversation history from the parent, with the new prompt layered on top. In practice, this means Codex sub-agents have more context to work with and can iterate more effectively on tasks where continuity matters.

In testing, Claude Code's isolation hurt research sub-agents specifically. They only saw the immediate prompt and could not leverage prior context. Codex sub-agents, working with the full history, produced better results on those same tasks.
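The difference comes down to what each sub-agent starts with. Here is a simplified sketch of the two context-building rules as described above. It uses a hypothetical list-of-messages representation, not either tool's real prompt format.

```python
from typing import Dict, List

Message = Dict[str, str]


def isolated_context(system_prompt: str, global_rules: str, task: str) -> List[Message]:
    """Claude Code style: a fresh window with no parent conversation history."""
    return [
        {"role": "system", "content": system_prompt},
        {"role": "system", "content": global_rules},
        {"role": "user", "content": task},
    ]


def inherited_context(parent_history: List[Message], task: str) -> List[Message]:
    """Codex style: the parent's full history with the new prompt layered on top."""
    # Build a new list so the parent's history is not mutated.
    return [*parent_history, {"role": "user", "content": task}]
```

The trade-off is visible in the return values. The isolated context stays small and predictable but drops earlier findings. The inherited context carries every prior research step and grows with the parent session.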

Point: Codex CLI (in practice)


When to Choose Claude Code

Claude Code is the right tool when:

- You are doing architecture-level planning where Opus 4.7's reasoning depth matters

- You want hooks and lifecycle scripts for safety or automation

- You need sub-agents running in isolated worktrees on complex parallel tasks

- You want the widest surface area across desktop, mobile, and web

- You are building UI-heavy products where Opus 4.7's polish instinct adds visible value

When to Choose Codex CLI

Codex CLI is the right tool when:

- Token efficiency matters (you are working on a limited plan or managing cost carefully)

- You want a stable, smooth terminal experience without UI regressions

- You need autonomous execution without permission interruptions in YOLO mode

- You are building production apps where fallback mechanisms and edge case handling are critical

- You want cross-project memory that accumulates over time

Pros and Cons at a Glance

Here is the full scorecard from the nine categories above, distilled into one place. Use it as a reference when you are deciding between the two for a specific project.

Claude Code (Opus 4.7)

| Pros | Cons |
|---|---|
| Deeper architectural planning and reasoning depth | Roughly 2x more tokens on the same task (173k vs 82k) |
| Rewinding lets you roll back mistakes mid-session | Pro and Max plans only, no free tier |
| Visible thinking with Ctrl+O for mid-task corrections | UI regressions since version 2.1.0 (rendering glitches, memory leaks) |
| Hooks and lifecycle scripts for safety and automation | React-based TUI feels laggier than Codex's Rust UI |
| Sub-agents run in isolated worktrees with strict context isolation | Auto mode now interrupts with permission prompts even on long-running tasks |
| Sub-agents can be spawned automatically without explicit invocation | No personality settings, must rely on CLAUDE.md instructions |
| Wider ecosystem across desktop, mobile, and web | Sub-agent context isolation hurts research tasks that need prior context |
| UI polish instinct, behaves like a full-stack engineer | No pre-installed agent browser or skill creator skills |
| Ultrathink mode for harder problems | No native image generation in the CLI |
| More mature sub-agent integration overall | |

Codex CLI (GPT 5.5)

| Pros | Cons |
|---|---|
| Significantly more token efficient (82k vs 173k on the same task) | No rewind feature for rolling back changes |
| Available on free tier | Reasoning view is less accessible than Claude Code's Ctrl+O |
| Rust-based UI stays smooth even on long sessions | Sub-agents only spawn when explicitly requested |
| YOLO mode runs without permission prompts | Smaller ecosystem, mostly terminal-focused |
| Personality config for concise, direct responses | Hooks system is less mature than Claude Code's |
| Pre-installed skills including agent browser and skill creator | UI polish on greenfield builds is weaker than Opus 4.7's |
| Sub-agents inherit full context, better continuity for research | |
| Defensive fallbacks and edge case handling, behaves like a backend engineer | |
| Native image generation inside the CLI | |
| Stronger cross-session memory across projects | |

The Bottom Line

Claude Code and Codex CLI are more evenly matched in 2026 than at any point before. The right answer depends on what you are optimizing for. If you need the deepest planning, the widest ecosystem, and the strongest hooks system, Claude Code is still the more mature platform. If you want better token efficiency, smoother day-to-day usability, and an agent that handles edge cases defensively, Codex CLI has a real case to make.

The most honest summary: use Claude Code when you need Opus 4.7's reasoning depth for hard problems. Use Codex CLI for the daily workload where cost, reliability, and autonomous execution matter more than maximum depth.

If you want a structured approach to building with these tools, the Master Course on building and shipping a production-ready app covers the full AI coding workflow from first prototype to deployed product. That includes how to configure Claude Code with hooks and worktrees for production use. For a broader view of where these tools fit alongside Cursor, see the guides on Cursor best practices and AI coding workflows in 2026. The patterns transfer directly whether you are working in a CLI agent or an IDE.